When AI broke into our lives, many imagined it as a sort of all-purpose assistant, capable of handling any task thrown its way. Businesses paused to observe, with many slowing or even freezing hiring, while employees held their breath, worried about potential layoffs.

Today, the picture is much clearer: AI is far from being a full replacement for human roles. Meanwhile, the approach to work is being altered in line with the new realities, and proficiency with AI tools is now more valuable than ever.

Development teams have not been left untouched. Developers use AI to write code faster and more cleanly, business analysts shape requirements with its help, and quality assurance engineers increasingly rely on it to draft test scenarios efficiently.

Testers were considered prime candidates for replacement by AI. In practice, those expectations turned out to be wrong: a human eye is still needed to ensure solid, high-quality test coverage of a product. Why were expectations of AI so inflated when it comes to quality assurance? How do engineers use the tool in their work, where does it help, and where is it completely useless? Read on to find out.

Key Highlights

- AI in testing is no different from any other engineering tool: it amplifies human capability but still depends entirely on clear context, domain understanding, and engineering oversight.

- True value emerges when AI supports testers in repetitive, data-heavy tasks, freeing them to focus on analysis, validation, and complex scenarios that require human judgment.

- AI-generated tests can speed up delivery and expand coverage, but without proper review, they risk becoming noise rather than assurance.

- The future of QA lies in synergy: testers who can guide, interpret, and refine AI outputs will set the standard for quality in the era of intelligent automation.

AI-Based Software Testing and How It Differs from the Traditional Approach

AI testing can seem almost magical, as if you could type in a few lines of text and the system would instantly write perfect tests and find every bug. In reality, it’s much more down-to-earth and, for that very reason, far more interesting. One thing has become crystal clear by now: artificial intelligence is unlikely to replace humans. It’s a tool that helps them do their work better and faster, and nothing more.

Traditional testing relies on explicitly defined scenarios, while the AI-based approach is based on data analysis, probability, and prediction. The machine doesn’t exactly know what to test; rather, it infers where issues are most likely to occur by recognizing patterns, analyzing logs, studying code structures, and learning from past defects. In that sense, AI testing resembles an experienced engineer’s intuition, with the only difference being speed.

However, it still lags in understanding. Tools like ChatGPT or specialized AI-based platforms are great at generating vast numbers of test scenarios, combining multiple input cases, and covering edge conditions.

But the quality of those tests depends entirely on the context and the prompts provided by the human. AI doesn’t truly “understand” what’s important for a specific product; it simply connects patterns. Without human review, tuning, and prioritization, AI-generated tests can easily turn into noise.

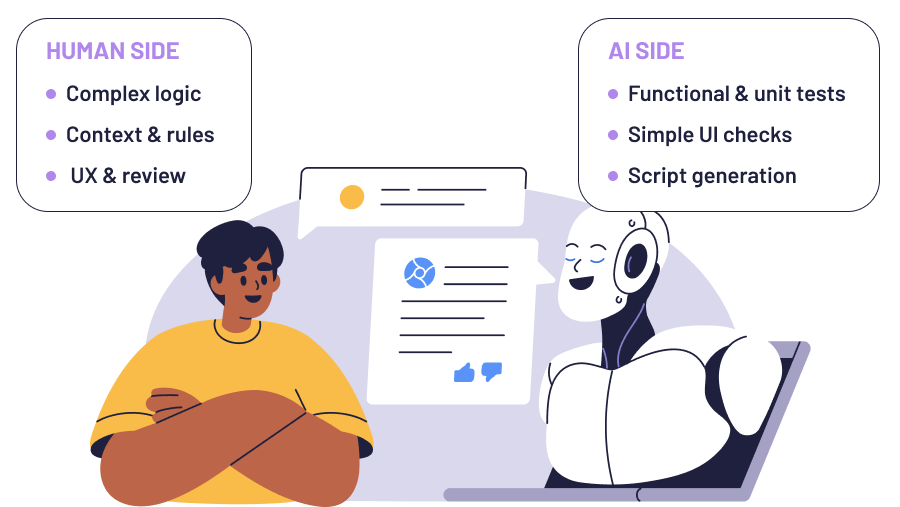

The real difference lies in how AI transforms the tester’s role. Instead of manually writing repetitive tests, testers become so-called architects of the testing process: defining logic, setting quality criteria, and managing the tools. This shift requires a new skill set — understanding how models learn, how to communicate with them, and how to verify their output.

Test case creation using AI is increasingly applied in software development, fintech, e-commerce, telecom, and large-scale SaaS projects. It’s particularly useful for projects with frequent releases, complex UIs, or repetitive functional checks where AI can quickly generate scripts while humans focus on critical logic, edge cases, and UX considerations.

Context Issues and Why They’re the Biggest Headache of AI Testing

The biggest pain point in AI-based testing is context. The thing is that the machine has no idea what exactly you need until you spell it out in detail. You can’t just ask “write a test for authentication”: it doesn’t know what system you’re working with, what the business rules are, what the interface looks like, or what counts as a success or a bug. Without context, it’s blind and totally lost.

Working with AI isn’t like delegating a task to an experienced colleague; it’s more like onboarding a brand-new, random hire from the street and trying to get them up to speed in just several hours. They might be smart and diligent, but until they grasp the specifics of the project, they’ll hardly be able to deliver an acceptable result.

That’s why AI testing never starts with test generation, but with careful context setup: defining what’s being tested, in what environment, with which tech stack, rules, and dependencies.

And that’s where the frustration begins. If your context is incomplete, AI produces garbage: shallow tests, generic checklists, and overly abstract scenarios. As a result, you end up spending more time reviewing, correcting, and re-prompting than it would take to just write the tests yourself, especially if the case involves manual testing scenarios.

But once you give AI something to build from, say, project documentation, an existing test suite, a bit of code, or even an HTML snippet, you can expect a reasonably solid result. If it has real material to anchor to, it can generate decent tests: find locators, plug them into scripts, outline scenarios, and even propose structure. In that setup, the tester becomes more of an editor, reviewing and organizing the output.

The real challenge is that context doesn’t fit into a single prompt. You have to decide which part to feed it, how to optimize the input, and how to structure the project around it. One test isn’t enough here. You need a whole test suite, a clean architecture, and readable and reusable code. That takes skill, experience, and engineering thinking — things AI can’t have by its nature.

Testing Tasks AI Handles with Ease, and What Is Better Left to Humans

As the previous section shows, good context matters a great deal, but providing it to an AI model takes time. That’s why one of the common traps in AI testing is discovering that you often spend more time explaining what to do than you would spend writing the test yourself. And that’s perfectly true!

There are tasks where AI-powered test automation just doesn’t pay off. It’s like that meme: you can spend 15 minutes doing it manually, or four hours automating it, and we all know what most people would choose.

For example, if you want to use AI for test case generation, remember that for complex scenarios it’s often faster to do it manually. For an AI model to write meaningful tests, it needs to “live through” the full context: understand the architecture, the business logic, the system constraints. That means dozens of clarifying prompts, reviews, and edits. And what awaits you in the end? Tests you’ll still end up rewriting.

To evaluate AI-based test case development, focus on coverage, defect detection, and reliability. Track how much of the code or feature set is covered, whether AI catches real issues versus false positives or negatives, and how much human effort is needed to review and refine the tests.

Also consider time savings and how easily AI-generated tests integrate into existing suites and CI/CD pipelines. These metrics help ensure AI delivers meaningful value without compromising product quality.
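To make these metrics concrete, here is a minimal sketch of how they could be tallied after a pilot run. The field names, the `summarize` helper, and the sample numbers are all assumptions made for illustration, not figures from a real project.

```python
from dataclasses import dataclass


@dataclass
class AiTestRunStats:
    """Hypothetical tallies collected while piloting AI-generated tests."""
    features_total: int     # features in scope
    features_covered: int   # features with at least one AI-generated test
    true_failures: int      # failures that pointed at a real defect
    false_failures: int     # failures caused by a wrong test (false positives)
    missed_defects: int     # real defects no AI test caught (false negatives)
    review_minutes: float   # human time spent reviewing and fixing the tests
    manual_minutes: float   # estimated time to write the same tests by hand


def summarize(stats: AiTestRunStats) -> dict[str, float]:
    """Turn raw tallies into the ratios discussed above."""
    reported = stats.true_failures + stats.false_failures
    real = stats.true_failures + stats.missed_defects
    return {
        "coverage": stats.features_covered / stats.features_total,
        "precision": stats.true_failures / reported if reported else 0.0,
        "recall": stats.true_failures / real if real else 0.0,
        "review_ratio": stats.review_minutes / stats.manual_minutes,
    }


# Made-up sample numbers, for illustration only.
summary = summarize(AiTestRunStats(40, 30, 8, 2, 2, 90, 240))
print(summary)
```

A review ratio below 1 suggests that reviewing the AI output was cheaper than writing the tests by hand; a ratio above 1 suggests the opposite.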

What AI does well is basic functional and unit testing: simple checks with predictable outcomes. That’s its comfort zone. All you need to do is feed it an HTML snippet and a short test description, like: “Open the login page, enter credentials, verify that the dashboard loads.” Within a minute, you’ve got a usable test script, perhaps still not a perfect one, but good enough to run after a quick review.

We tried this approach when we were working on our Velvetel product, our contact center solution. Previously, simple UI checks, like call validation or playback testing, were written manually. Later, we shifted these responsibilities to AI: grabbed the HTML fragment of a page, pasted it into a prompt, described the scenario, and the expected result. The AI found the locators, wrote the test, and the output was almost production-ready.

It sounds simple, and it is to a certain point. Starting a project from scratch still requires effort, but even there, AI can assist you: walking you through setup steps, configuration, imports, and dependencies. From our own experience, building a working project and test suite manually would have taken around three months. With AI, it took about sixteen hours — and the result wasn’t a toy example, but a real, extendable project.

Garbage In, Garbage Out: Things to Remember When Striving for Impeccable Test Quality

When it comes to the quality of AI-powered test generation, everything boils down to one simple principle: garbage in — garbage out. Whatever you feed in is exactly what you’ll get back. No matter how smart and powerful the model seems to be, if your prompt is vague, poorly structured, or lacks context, the output will reflect that.

The first step to avoiding this trap is setting clear boundaries and context. Define precisely what’s being tested, in which environment, using what tech stack, and with which assumptions and criteria. It also helps to assign the model a role, for example: “Act as a senior QA engineer specializing in automated tests in TypeScript using Playwright.” This frames the AI’s thinking, nudging it toward established approaches and best practices used by seasoned QA professionals.
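One way to enforce this discipline is to assemble the prompt from a fixed set of context fields and refuse to proceed while any of them is empty. The sketch below is only an illustration: `PromptContext`, `missing_fields`, `build_prompt`, and every sample value except the role sentence are assumptions, not part of any tool's API.

```python
from dataclasses import dataclass, fields


@dataclass
class PromptContext:
    """Hypothetical container for the context a test-generation prompt needs."""
    role: str
    subject: str
    environment: str
    tech_stack: str
    assumptions: str
    success_criteria: str


def missing_fields(ctx: PromptContext) -> list[str]:
    """List the context fields that were left empty."""
    return [f.name for f in fields(ctx) if not getattr(ctx, f.name).strip()]


def build_prompt(ctx: PromptContext, task: str) -> str:
    """Assemble the prompt, refusing to proceed with incomplete context."""
    missing = missing_fields(ctx)
    if missing:
        raise ValueError("Context is incomplete: " + ", ".join(missing))
    return "\n".join([
        f"Act as {ctx.role}.",
        f"What is being tested: {ctx.subject}",
        f"Environment: {ctx.environment}",
        f"Tech stack: {ctx.tech_stack}",
        f"Assumptions: {ctx.assumptions}",
        f"Success criteria: {ctx.success_criteria}",
        f"Task: {task}",
    ])


# Sample values are made up, apart from the role sentence quoted above.
ctx = PromptContext(
    role="a senior QA engineer specializing in automated tests in TypeScript using Playwright",
    subject="login form of a web application",
    environment="staging, latest Chromium",
    tech_stack="TypeScript, Playwright",
    assumptions="a test user already exists",
    success_criteria="the dashboard loads after valid credentials are submitted",
)
prompt = build_prompt(ctx, "Write an end-to-end test for a successful login.")
print(prompt)
```

The point of the design is that a vague request fails early, before any time is spent reviewing a weak answer.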

But even with a perfect prompt, mistakes happen. AI can mix up locators, skip page load waits, or invent fields that don’t exist. Reviewing machine-written tests remains an essential part of the process, and experience working with AI matters here: the more you work with such tests, the better you get at spotting the weak points, whether in logic, data, or timing.
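Part of that review can be automated. As a rough sketch, the check below compares the element ids a generated script refers to with the ids actually present in the HTML fragment that was given to the model. The helper names, the sample HTML, and the sample script text are all hypothetical, and the check only covers `#id` selectors, not other locator styles.

```python
import re
from html.parser import HTMLParser


class IdCollector(HTMLParser):
    """Collects every id attribute present in an HTML fragment."""

    def __init__(self) -> None:
        super().__init__()
        self.ids: set[str] = set()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "id" and value:
                self.ids.add(value)


def unknown_ids(html: str, test_script: str) -> set[str]:
    """Return ids the script references that the HTML does not contain."""
    collector = IdCollector()
    collector.feed(html)
    referenced = set(re.findall(r"#([A-Za-z_][\w-]*)", test_script))
    return referenced - collector.ids


# Made-up fragment and made-up generated script text, for illustration only.
HTML = (
    '<form><input id="username"><input id="password">'
    '<button id="submit">Log in</button></form>'
)
SCRIPT = '''
page.fill("#username", "demo")
page.fill("#password", "secret")
page.click("#login-button")
'''

invented = unknown_ids(HTML, SCRIPT)
print(invented)
```

Here the script clicks an element that the fragment does not contain, so the reviewer knows exactly where to look first.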

Sometimes the model simply “fills in the blanks” on its own, for no apparent reason. For instance, when we were porting a script from PostgreSQL to MySQL, the model renamed the full name column to name without being asked, breaking the whole script. It seems like a minor thing, but the consequences are big, and sometimes it’s easier and faster to find the error and fix it manually than to spend dozens of prompts explaining to the model what mistake it made.
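A cheap guard against this kind of silent rename is to compare column names before and after the conversion. The sketch below uses a deliberately simple parser for `CREATE TABLE` statements. The table, the exact identifiers (`full_name`, `name`), and the helper `column_names` are assumptions for illustration; the original script is not shown here.

```python
CONSTRAINT_KEYWORDS = {"PRIMARY", "FOREIGN", "UNIQUE", "CONSTRAINT", "CHECK", "KEY", "INDEX"}


def column_names(create_table_sql: str) -> list[str]:
    """Extract column names from a single, simple CREATE TABLE statement."""
    start = create_table_sql.index("(") + 1
    end = create_table_sql.rindex(")")
    parts, depth, current = [], 0, ""
    for ch in create_table_sql[start:end]:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
        if ch == "," and depth == 0:  # split only on top-level commas
            parts.append(current)
            current = ""
        else:
            current += ch
    parts.append(current)
    names = []
    for part in parts:
        tokens = part.split()
        if tokens and tokens[0].upper() not in CONSTRAINT_KEYWORDS:
            names.append(tokens[0].strip('`"').lower())
    return names


# Hypothetical before/after schemas; the identifiers are assumed.
POSTGRES = """
CREATE TABLE customers (
    id SERIAL PRIMARY KEY,
    full_name VARCHAR(200) NOT NULL,
    balance NUMERIC(10, 2)
);
"""
MYSQL = """
CREATE TABLE customers (
    id INT AUTO_INCREMENT PRIMARY KEY,
    name VARCHAR(200) NOT NULL,
    balance DECIMAL(10, 2)
);
"""

dropped = set(column_names(POSTGRES)) - set(column_names(MYSQL))
added = set(column_names(MYSQL)) - set(column_names(POSTGRES))
print("dropped:", dropped, "added:", added)
```

A non-empty difference does not prove a bug, but it flags the rename in seconds instead of after a broken run.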

That’s why achieving real quality isn’t about trusting AI blindly, but about collaborating with it. You give it a precise, well-structured input, and it produces a working draft. Then you review, refine, and bring in your own engineering judgment. The responsibility for quality still lies with you.

Risks and Complexities of AI-Driven Tests

When it comes to using AI in software testing, the first thing to keep in mind is caution about what you feed it and which tool you’re feeding it to. Many AI systems can reuse the data you provide for training, and in the worst case, that information might resurface in unexpected contexts. It’s not only about data leaks; sometimes it’s about the model unpredictably reusing parts of your prompts in other responses.

The second category of risks involves the quality and reliability of the output. If you skip reviewing what the AI produces, you can easily end up with a test that looks valid but actually checks the wrong thing or, even worse, lets critical bugs slip into production. The rule here is simple: AI generates, a human reviews. This is an axiom.

If reviewing takes less time than writing the test from scratch, the tool is doing its job well. If it takes longer, something’s off, either in the prompt or in the task formulation.

It’s also important to understand that the risk level depends on what exactly you’re generating. When AI writes automated tests, the consequences of its mistakes are usually contained: the test either passes or fails without touching the core business logic.

But when AI generates production code, that’s a whole different story. You can run into conflicting validations, logical contradictions, or architectural flaws — the same types of issues a human developer might introduce. The difference is that AI’s mistakes can be subtler because “it looks fine.”

In AI-assisted test case management, verification starts with reviewing the AI-generated tests against expected behavior, business rules, and system constraints. Validation involves running the tests in a controlled environment to check accuracy, catch false positives or negatives, and ensure they don’t disrupt the system.
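One simple way to catch a test that looks valid but checks the wrong thing is a mutation-style sanity check: run the test against the correct code and against a deliberately broken copy, and expect it to fail on the broken one. This technique is not named in the article; the sketch below is an illustration, and the password rule and all function names are hypothetical.

```python
from typing import Callable

Validator = Callable[[str], bool]


def is_valid_password(password: str) -> bool:
    """Hypothetical rule under test: at least 8 characters and one digit."""
    return len(password) >= 8 and any(ch.isdigit() for ch in password)


def broken_is_valid_password(password: str) -> bool:
    """Deliberately broken variant: the digit requirement is missing."""
    return len(password) >= 8


def weak_test(validate: Validator) -> bool:
    """Looks valid, but only exercises the length rule."""
    return validate("abcdefg1") and not validate("short1")


def strong_test(validate: Validator) -> bool:
    """Also checks that a long password without a digit is rejected."""
    return weak_test(validate) and not validate("abcdefgh")


def detects_bug(test: Callable[[Validator], bool]) -> bool:
    """A useful test passes on the correct code and fails on the broken one."""
    return test(is_valid_password) and not test(broken_is_valid_password)


print("weak test detects the bug:", detects_bug(weak_test))
print("strong test detects the bug:", detects_bug(strong_test))
```

The weak test passes on both implementations, which is exactly the kind of false assurance a reviewer needs to catch before the suite is trusted.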

Human oversight is still of critical importance: AI can draft tests quickly, but meaningful and safe coverage depends on careful review, refinement, and context-aware adjustments.

Final Thoughts

AI is just a tool, and like any tool, it’s only as effective as the person using it. A hammer won’t drive nails by itself — it works in the hands of someone who knows what they’re doing. That’s why the real question here isn’t whether AI-based test generation will fully replace QA engineers, but how quickly testers will learn to use it properly.

Looking for a partner who truly understands how to put AI to work? Our team of skilled developers and QA engineers is ready to help you build robust, high-performing, and secure solutions that stand the test of time.